Kate Garraway’s Struggle with Haringey Council Correspondence for Late Husband

Kate Garraway has revealed the ongoing challenge of receiving mail intended …

Toyota Recalls 55,000 Vehicles Over Rear Door Issue

Toyota recalls approximately 55,000 vehicles in …

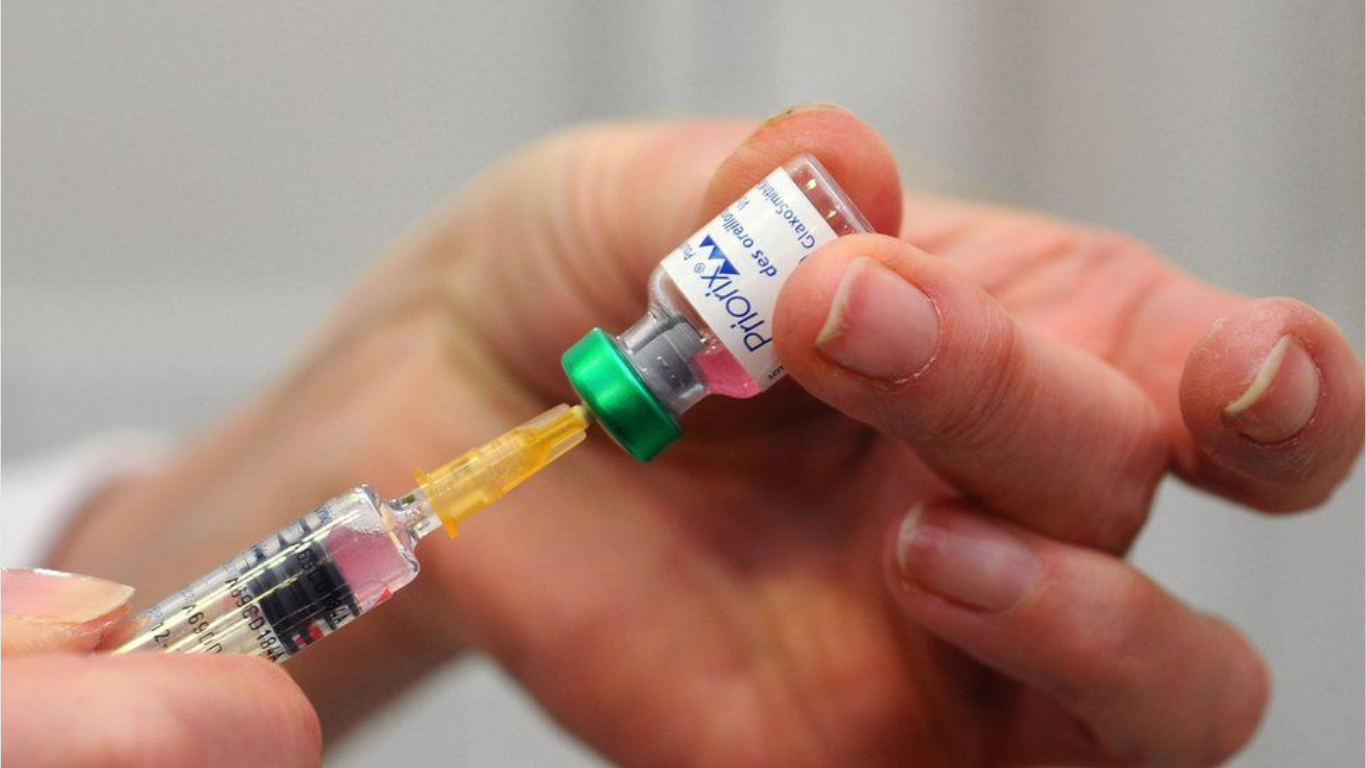

Pastor Chris Oyakhilome’s Anti-Vaccine Statements Risk Malaria Vaccine Uptake in Africa

Nigerian Pastor Chris Sparks Concern Over Anti-Vaccine Campaign. In a recent sermon broadcast on …

Rapper NBA YoungBoy Arrested in Cache County for Alleged Involvement in Extensive Prescription Fraud Scheme

Rapper NBA YoungBoy Arrested in Utah on Suspicion of Multiple Offenses. Rapper NBA YoungBoy …

Toronto Raptors Player Jontay Porter Banned From NBA for Life Amid Betting Scandal

ESPN first reported on the investigation …

Arrest Made in Salman Khan Shooting Incident: Suspects Confess to Gang Affiliation

Salman Khan: Two people arrested for firing at Bollywood star. Arrest of Suspects in …

Unprecedented Dubai Flooding Disrupts Airport Operations

Dubai flooding: Unprecedented rainfall causes widespread flooding. Dubai, United Arab Emirates, is grappling with …

Nostalgia Reigns Supreme: Celebrating 50 Years of Little House on the Prairie

“Little House on the Prairie” 50th Anniversary Festival. If you grew up between the …

Former US Senator and Governor Bob Graham Passes Away at 87

Bob Graham Dies at 87: Former US Senator and Governor. Passing of a Political …